Warning: Contains spoilers. Go read Part 1 and Part 2 first.

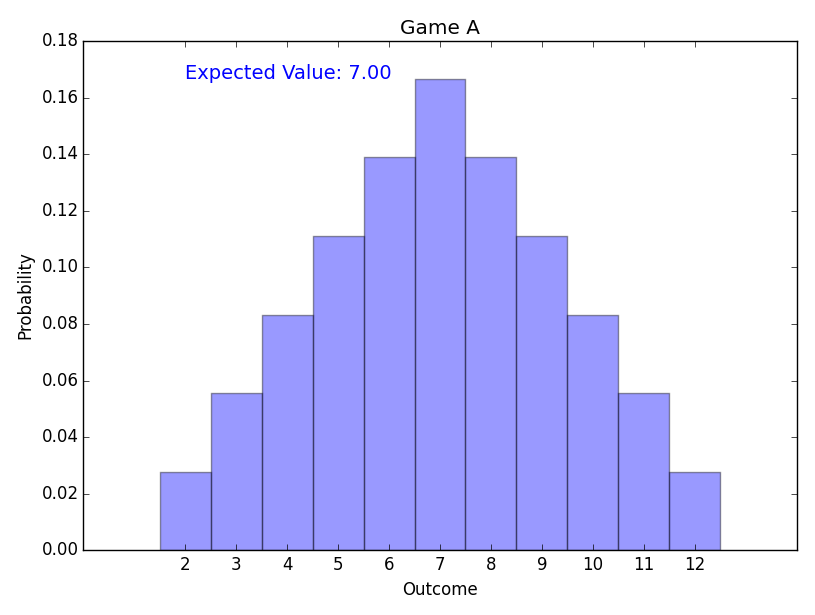

Game A

It is easy to work out the model for this game by hand, but remember my goal here is to exercise some code.

So, let’s see some output from the code. Here is the distribution of payouts.

The expected value is $7. That is the minimum a casino could charge. (A real casino has overheads and a profit motive, and would have to charge more.)
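For anyone who wants to reproduce that figure, here is a minimal Python sketch (not the code used for this post). It assumes Game A simply pays the total of two fair six-sided dice, which is what the $7 figure implies; the variable names are my own.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Assumption: Game A pays out the total of two fair six-sided dice.
rolls = list(product(range(1, 7), repeat=2))
counts = Counter(a + b for a, b in rolls)

# Probability of each payout, kept as an exact fraction.
distribution = {total: Fraction(n, len(rolls)) for total, n in sorted(counts.items())}
expected_value = sum(total * p for total, p in distribution.items())

for total, p in distribution.items():
    print(f"${total:>2}: {p}")
print("expected value:", expected_value)
```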

Easy.

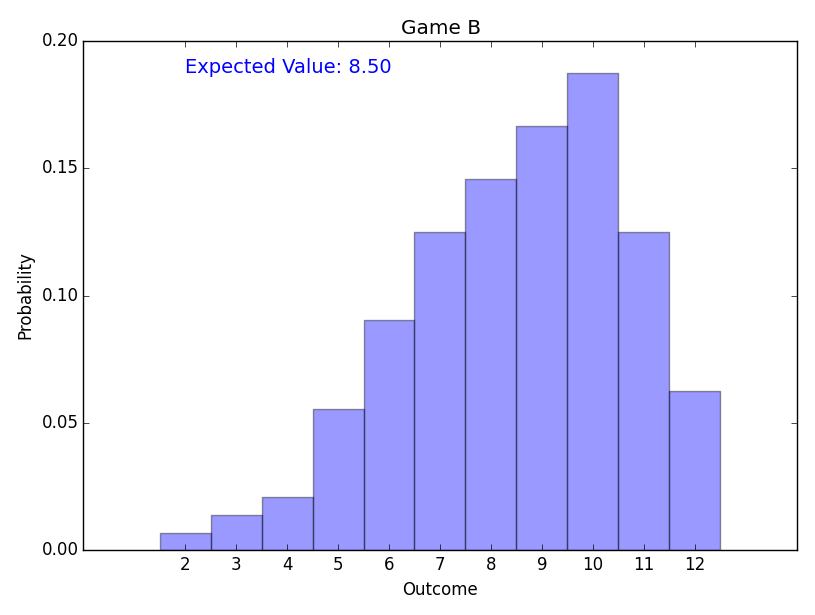

Game B

The Basic Strategy after the first roll is represented by the table below.

The number across the top of the table represents the higher of the two initial dice. The number down the side represents the lower. The entry in each cell is either 0 (Re-roll no dice), 2 (Re-roll both dice) or 1 (Re-roll the lower die only, or either if they are the same.)

|   | 6 | 5 | 4 | 3 | 2 | 1 |
|---|---|---|---|---|---|---|
| 1 | 1 | 1 | 1 | 2 | 2 | 2 |
| 2 | 1 | 1 | 1 | 2 | 2 |   |
| 3 | 1 | 1 | 1 | 2 |   |   |
| 4 | 0 | 0 | 0 |   |   |   |
| 5 | 0 | 0 |   |   |   |   |
| 6 | 0 |   |   |   |   |   |

Note: Whenever I refer to Basic Strategy, this table above describes it.

(I assume it is obvious that the player should never choose to only roll the higher of the two dice, and never bothered to prove it.)

Here is the distribution of payouts.

The expected value of the optimal strategy is $8.50.

Now, that is what my code says, but my goal here was to test the code, so let’s check this out manually.

Consider just the red die.

There is a 50% chance of the initial roll being a 4, 5 or 6. These are above the expected value of a second roll, and should be kept. Each has the same chance of turning up, so the expected value from the red die in this scenario is the average, i.e. 5.

There is a 50% chance of the initial roll being a 1, 2 or 3. These are below the expected value of a second roll, and should be re-rolled. The re-roll has an expected value of 3.5.

The expected value of the red die is therefore (50% * 5) + (50% * 3.5) = 2.5 + 1.75 = 4.25.

By equivalent logic, the expected value of the blue die is also 4.25. The sum of both independent dice is expected to add up to 8.50, which matches the code. Yay!
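The same check can be done by brute force, without splitting the dice apart. The sketch below is my own illustration rather than the code behind this post: for every first roll it compares the three options in the table (sit, re-roll the lower die, re-roll both) by expected payout, assuming the payout is the final total. `best_action` is a hypothetical helper name.

```python
from fractions import Fraction
from itertools import product

DIE = range(1, 7)
MEAN = Fraction(7, 2)  # expected value of a single fair die

def best_action(hi, lo):
    """Return (expected payout, number of dice to re-roll) for a first roll."""
    options = [
        (Fraction(hi + lo), 0),  # sit on both dice
        (hi + MEAN, 1),          # keep the higher die, re-roll the lower
        (2 * MEAN, 2),           # re-roll both dice
    ]
    return max(options)

# Average the best option over all 36 equally likely first rolls.
expected_value = sum(best_action(max(a, b), min(a, b))[0]
                     for a, b in product(DIE, DIE)) / 36

# Print the strategy table: higher die across the top, lower die down the side.
print("    " + "  ".join(str(hi) for hi in range(6, 0, -1)))
for lo in DIE:
    cells = [str(best_action(hi, lo)[1]) if hi >= lo else " " for hi in range(6, 0, -1)]
    print(f"{lo} | " + "  ".join(cells))
print("expected value:", float(expected_value))
```

The printed grid should line up with the Basic Strategy table, and the expected value with the hand calculation.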

Note that this analysis breaks the strategy into two separate concerns: whether to re-roll the red die, and whether to re-roll the blue die. As explained in Part 2, this means I consider it uninteresting as a game.

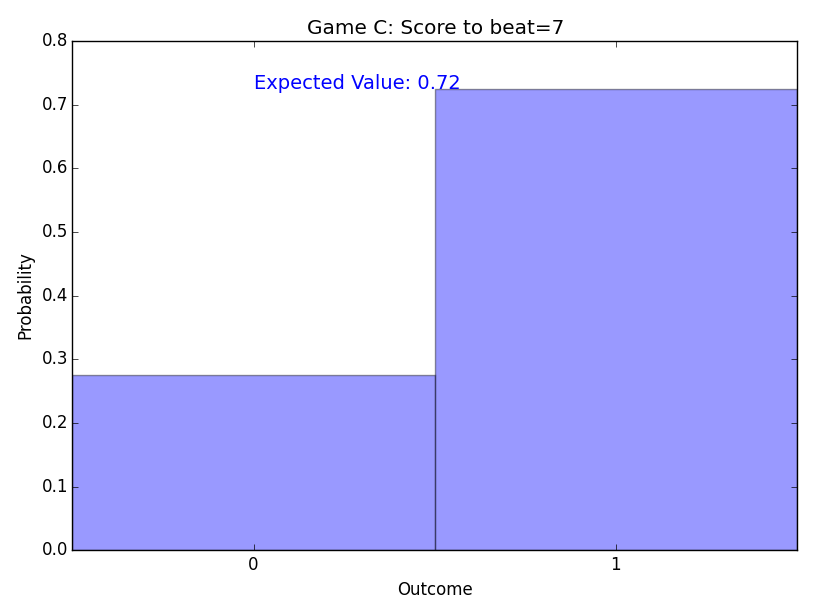

Game C

From here on in, the distribution charts become less interesting to look at, because the outcomes are merely “0” (i.e. lose) or “1” (i.e. win). This is the last one I’ll include.

The expected value is about $0.7245 – it should cost at least that much money to play.

I haven’t hand-checked that value, but it sounds about right. Testing becomes a lot slacker at this point. Don’t base a casino on these figures!

Note: The optimal strategy has changed.

|   | 6 | 5 | 4 | 3 | 2 | 1 |
|---|---|---|---|---|---|---|
| 1 | 1 | 1 | 1 | 2 | 2 | 2 |
| 2 | 0 | 1 | 1 | 2 | 2 |   |
| 3 | 0 | 0 | 1 | 2 |   |   |
| 4 | 0 | 0 | 0 |   |   |   |
| 5 | 0 | 0 |   |   |   |   |
| 6 | 0 |   |   |   |   |   |

For example, rolling a 3 and a 5 means you now sit on the 3, whereas a 3 and a 3 means re-roll them both. So it is no longer playable by treating the dice separately. This game has become more interesting.
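Here is a rough Python sketch of how such a win-or-lose game can be solved by enumeration. It is an illustration, not the code used for this post, and it assumes the player wins when the final total is strictly greater than a target of 7, which is consistent with the table and the quoted value. The names `win_chances` and `game_value` are hypothetical.

```python
from fractions import Fraction
from itertools import product

DIE = range(1, 7)

def win_chances(hi, lo, target):
    """Chance of finishing above `target` for each re-roll count (0, 1 or 2)."""
    sit = Fraction(int(hi + lo > target))
    reroll_lower = Fraction(sum(hi + d > target for d in DIE), 6)
    reroll_both = Fraction(sum(a + b > target for a, b in product(DIE, DIE)), 36)
    return {0: sit, 1: reroll_lower, 2: reroll_both}

def game_value(target):
    """Win probability when the best option is taken after every first roll."""
    total = sum(max(win_chances(max(a, b), min(a, b), target).values())
                for a, b in product(DIE, DIE))
    return total / 36

for hi, lo in [(5, 3), (3, 3)]:
    chances = win_chances(hi, lo, 7)
    best = max(chances, key=chances.get)  # ties go to the smaller re-roll count
    print(f"{hi} and {lo}: re-roll {best} dice")
print("value:", round(float(game_value(7)), 4))
```

Because the target is a parameter, the same two functions can be pointed at other "Beat an X" variants.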

If we change the sign to say “Beat a 9 to win”, we get another interesting result:

|   | 6 | 5 | 4 | 3 | 2 | 1 |
|---|---|---|---|---|---|---|
| 1 | 1 | 1 | 1 or 2 | 2 | 2 | 2 |
| 2 | 1 | 1 | 1 or 2 | 2 | 2 |   |
| 3 | 1 | 1 | 1 or 2 | 2 |   |   |
| 4 | 0 | 1 | 1 or 2 |   |   |   |
| 5 | 0 | 0 |   |   |   |   |
| 6 | 0 |   |   |   |   |   |

If you have a pair of 4s, you can’t sit, because you are certain to lose.

You can either try to roll just one of them again (for a 1/6 chance of getting a 6), or try rolling both of them again (for a 6/36 = 1/6 chance of getting a 4&6, 6&4, 5&5, 5&6, 6&5, or 6&6). [Note: This line was corrected from the original.]

Both strategies are equally good. So, optimal strategy doesn’t require you to split double 4s, but it permits it.
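The comparison for a pair is small enough to count directly. A minimal sketch, assuming "Beat a 9" means the final total must exceed 9; `pair_options` is a hypothetical helper, not part of the code behind this post.

```python
from fractions import Fraction
from itertools import product

DIE = range(1, 7)

def pair_options(face, target):
    """Win chances for a pair of `face`: (re-roll one die, re-roll both dice)."""
    split = Fraction(sum(face + d > target for d in DIE), 6)
    both = Fraction(sum(a + b > target for a, b in product(DIE, DIE)), 36)
    return split, both

split, both = pair_options(4, 9)  # double 4s against "Beat a 9"
print("re-roll one:", split, "re-roll both:", both)
```

Both numbers come out as 1/6 for double 4s, which is the tie described above. Other pairs and targets can be tried by changing the two arguments.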

For the record: The expected value for this variant is about 0.3981.

Game D

I imagine this game might be more fun to play, because of the added suspense.

The croupier has an advantage over the player in that the croupier wins ties, but the player has a much bigger advantage of being able to improve their score with a re-roll. The expected value is about 0.6256.

However, unsurprisingly, there is no change to the player’s strategy. They continue to play Basic Strategy, to simply optimise their score.
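That expected value can be cross-checked with a short sketch. It rests on two assumptions of mine: the croupier rolls two dice once with no re-roll, and the player wins only with a strictly higher total. Under Basic Strategy each die is handled on its own (keep 4 to 6, re-roll 1 to 3), so the player's total is the sum of two independent "Basic Strategy dice".

```python
from collections import Counter
from fractions import Fraction
from itertools import product

DIE = range(1, 7)

# One die under Basic Strategy: keep a 4, 5 or 6; re-roll a 1, 2 or 3 once.
basic_die = Counter()
for first in DIE:
    if first >= 4:
        basic_die[first] += Fraction(1, 6)
    else:
        for second in DIE:
            basic_die[second] += Fraction(1, 36)

def total_of_two(die):
    """Distribution of the sum of two independent dice with the given face odds."""
    totals = Counter()
    for (a, pa), (b, pb) in product(die.items(), repeat=2):
        totals[a + b] += pa * pb
    return totals

plain_die = {face: Fraction(1, 6) for face in DIE}
player = total_of_two(basic_die)    # player re-rolls per Basic Strategy
croupier = total_of_two(plain_die)  # assumption: croupier rolls once, no re-roll

# The player must strictly beat the croupier, because ties go to the croupier.
p_win = sum(pp * pc
            for (s, pp), (c, pc) in product(player.items(), croupier.items())
            if s > c)
print(round(float(p_win), 4))
```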

Game E

While the handicap drops the expected value of the game down to about 0.5098, I was surprised to find it didn’t affect the optimal strategy. Basic Strategy was still the way to play.

In fact, increasing the handicap from +1 to +3 doesn’t change the optimal strategy at all. I was expecting it to encourage more risky behaviour, being less accepting of scoring an 8, for example.
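To see what the handicap does to the numbers, here is a hedged sketch with the handicap as a parameter. It assumes a handicap of +h means the player's total must exceed the croupier's total plus h, that the croupier rolls once with no re-roll, and that the player sticks to Basic Strategy. Under Basic Strategy each die finishes on 1, 2 or 3 with chance 1/12 each and on 4, 5 or 6 with chance 1/4 each. `win_chance` is a hypothetical name.

```python
from fractions import Fraction
from itertools import product

# Final face odds for one die under Basic Strategy (1-3 re-rolled once, 4-6 kept).
BASIC = {1: Fraction(1, 12), 2: Fraction(1, 12), 3: Fraction(1, 12),
         4: Fraction(1, 4), 5: Fraction(1, 4), 6: Fraction(1, 4)}
PLAIN = {face: Fraction(1, 6) for face in range(1, 7)}

def win_chance(handicap):
    """Chance a Basic Strategy player finishes above croupier total + handicap."""
    chance = Fraction(0)
    for (a, pa), (b, pb) in product(BASIC.items(), repeat=2):
        for (c, pc), (d, pd) in product(PLAIN.items(), repeat=2):
            if a + b > c + d + handicap:
                chance += pa * pb * pc * pd
    return chance

print(round(float(win_chance(1)), 4))
```

This only evaluates Basic Strategy; it says nothing about whether another strategy does better at a given handicap.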

A handicap of +4 (i.e. beat the croupier by at least 5) finally makes a difference:

|   | 6 | 5 | 4 | 3 | 2 | 1 |
|---|---|---|---|---|---|---|
| 1 | 1 | 1 | 2 | 2 | 2 | 2 |
| 2 | 1 | 1 | 2 | 2 | 2 |   |
| 3 | 1 | 1 | 2 | 2 |   |   |
| 4 | 0 | 0 | 2 |   |   |   |
| 5 | 0 | 0 |   |   |   |   |
| 6 | 0 |   |   |   |   |   |

By that stage, with an expected value of only about 0.1852, it becomes necessary to play more desperately, and re-roll 4s, unless they are paired with a 5 or 6.

Once again, the strategy involves looking at the combination of both dice, rather than each die individually, which makes it more interesting.

Game F

Note: The croupier is following Basic Strategy, which is the optimal strategy for Game B. It is not necessarily optimal strategy for Game F. We’ll come back to that in Game I.

The result of this change is that the croupier tends to get a higher score than in Game D. This makes it harder on the player (as is reflected in the reduced expected value – down to 0.4343).

While the rules are largely symmetrical now, the croupier gets the advantage of winning in the case of ties, so it is not a “fair” game.
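When both sides play the same strategy, the size of that tie advantage can be computed directly: the player's chance is (1 - P(tie)) / 2 and the croupier collects the rest. The sketch below is my own illustration and assumes both sides follow Basic Strategy, which leaves each die on 1, 2 or 3 with chance 1/12 each and on 4, 5 or 6 with chance 1/4 each.

```python
from fractions import Fraction
from itertools import product

# Final face odds for one die under Basic Strategy (1-3 re-rolled once, 4-6 kept).
BASIC = {1: Fraction(1, 12), 2: Fraction(1, 12), 3: Fraction(1, 12),
         4: Fraction(1, 4), 5: Fraction(1, 4), 6: Fraction(1, 4)}

# Distribution of the two-dice total for anyone playing Basic Strategy.
totals = {}
for (a, pa), (b, pb) in product(BASIC.items(), repeat=2):
    totals[a + b] = totals.get(a + b, Fraction(0)) + pa * pb

# Both sides draw from the same distribution, so the non-tie outcomes split evenly.
p_tie = sum(p * p for p in totals.values())
p_player = (1 - p_tie) / 2
p_croupier = p_player + p_tie  # ties go to the croupier

print("tie:", float(p_tie))
print("player:", float(p_player))
print("croupier:", float(p_croupier))
```

The player figure lands at roughly 0.434, in line with the expected value quoted above.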

However, once again, I am surprised that this fairly drastic change isn’t enough to change the optimal strategy from Basic Strategy.

By gut feel alone, I would have expected a pair of 4s to be worth re-rolling.

A peek behind the scenes confirms that there isn’t a big difference in expected value between the different strategies for a double 4. However, the Basic Strategy is certainly proving to be far more stable against rule tweaks than I had predicted.

Game G

The game is starting to get more interesting for the player. The croupier still gets the advantage of winning ties, but the player gets the larger advantage of knowing what target needs to be beaten. That encourages more conservative play when the croupier scores low, and more risky play (e.g. re-rolling 4s and even 5s) when the croupier gets a higher score.

Having the ability to know what score you need to target certainly helps the player, with the expected value back at about 0.6129.

The strategy becomes more complex, with different strategies required for different targets – I haven’t bothered to list them for space reasons. See Game C for a couple of examples.

Game H

This game has some analogy to Blackjack, where the players get to see the croupier’s first card before deciding their own strategy.

Unsurprisingly, the expected value of about 0.4422 is lower than seeing both dice (Game G), but higher than seeing neither (Game F).

My gut feel would be that the advantage would be greater than that.

I rationalise it to myself by thinking that if you see the croupier has a high score, you are already in trouble. If they show a very low score, you are back to playing Game D, so it is in the right ballpark. But I would still have expected a figure above 0.50.

Game I

What is the optimal play for the croupier to make life as hard as possible for the player, no matter how the player adapts their strategy to match the croupier’s strategy?

This is it! This is the goal I was working towards. It isn’t Expectimaxing, or even Minimaxing, but there is that single level of “Theory of Mind” there that makes it feel Game Theoryish.

Here is the result: The new strategy the croupier should follow:

|   | 6 | 5 | 4 | 3 | 2 | 1 |
|---|---|---|---|---|---|---|
| 1 | 1 | 1 | 1 | 2 | 2 | 2 |
| 2 | 1 | 1 | 1 | 2 | 2 |   |
| 3 | 1 | 1 | 1 | 2 |   |   |
| 4 | 0 | 0 | 0 |   |   |   |
| 5 | 0 | 0 |   |   |   |   |
| 6 | 0 |   |   |   |   |   |

Wait… That’s the same as Basic Strategy, and the expected value is the same as Game H.

Even though the croupier knows the player is going to see their first roll and use that information, there is no incentive for them to push a little harder and go for higher scores?

Dammit, Basic Strategy! You are too stable to be fun.

And I never did find a game that required double 4s to be split.

Comment by Julian on August 29, 2015

Correction made to Game C. (Conclusion was correct, but explanation got confused halfway through.) Thanks, Richard, for pointing out the error.

Comment by Julian on August 29, 2015

Richard’s other observation was that, in Game C, if I had gone up to “Beat a 10”, and looked closely, I would have seen that double 5s are optimally split.

(No re-roll is a guaranteed loss. Single re-roll gives 1 in 6 chance of getting a 6. Double re-roll gives 3/36 = 1 in 12 chance of getting a 5&6, 6&5 or 6&6. So, optimal strategy is to split the 5s.)

Comment by Jonathon Duerig on August 31, 2015

It is great to see you posting again.

One thing I didn’t think through when reading the original games post was that there is a big difference in Beat X games compared to Beat Croupier Whose Strategy Has Expected Value of X games.

It would be interesting to see what happens when the payoff function is the product of the dice rather than the sum. The basic strategy then involves rerolling just one die in a pair on double 4, 5, or 6. This rules change might have other interesting ripple effects as you get more complicated games as well. Maybe changing the rules like this is cheating, though. 🙂

-D